So the problem isn’t the technology. The problem is unethical big corporations.

Same as it ever was…

depends. for “AI” “art” the problem is both terms are lies. there is no intelligence and there is no art.

there is no intelligence and there is no art.

People said the exact same thing about CGI, and about photography before that. I wouldn’t be surprised if somebody screamed “IT’S NOT ART” at Michelangelo or at the people carving the walls of temples in ancient Egypt.

AI is a tool used by a human. The human using the tools has an intention, wants to create something with it.

It’s exactly the same as painting digital art. But instead of moving the mouse around, or copying other images into a collage, you use the AI tool, which can be pretty complex to use, to create something beautiful.

Do you know what generative art is? It existed before AI. Surely with your gatekeeping you think that’s also not art.

I’m so sick of this. there are scenarios in which so-called “AI” can be used as a tool. for example, resampling. it’s dodgy, but whatever, let’s say the tech is perfected and it truly analyzes data to give a good result rather than stealing other art to match.

but a tool is something that does exactly what you intend for it to do. you can’t say 100 dice are collectively “a tool that outputs 600” because you can sit there and roll them for as long as it takes for all of them to turn up sixes, technically. and if you do call it that, that’s still a shitty tool, and you did nothing worth crediting to get 600. a robot can do it. and it does. and that makes it not art.
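
To put a number on the dice analogy, here is a minimal sketch. It assumes fair, independent six-sided dice and that all 100 are thrown again after every failed attempt; the function names are made up for illustration.

```python
from fractions import Fraction


def all_sixes_probability(num_dice: int, sides: int = 6) -> Fraction:
    """Exact chance that every die shows a six in one throw of all the dice."""
    return Fraction(1, sides) ** num_dice


def expected_throws(num_dice: int, sides: int = 6) -> int:
    """Mean number of throws until the first all-sixes result.

    Each throw is an independent trial with success chance p,
    so the wait is geometric with mean 1 / p = sides ** num_dice.
    """
    return sides ** num_dice


p = all_sixes_probability(100)
throws = expected_throws(100)
print(f"chance per throw: about {float(p):.2e}")
print(f"expected throws: a {len(str(throws))}-digit number")
```

Under those assumptions the expected wait is 6^100 throws, which is why "roll until you get 600" is technically possible and practically hopeless.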

So you do not know what generative art is. And yet you presume to lecture on art.

Generative art, which existed even before computers, is a form of art in which an algorithm creates the work, and that algorithm can be repeated easily. Humans can replicate that algorithm, but computers can too, and generative art is mostly made with computers for obvious reasons. Those generative algorithms can be deterministic or non-deterministic.

And all this before AI, way before.

AI in essence is just a really complex and large generative algorithm that some people do not understand and thus are afraid of, like people used to be afraid of eclipses.

Also, you seem not to know that photographers also take hundreds or thousands of pictures by just pressing a button, and then just select the good ones.

cameras do not make random images. you know exactly what you’re getting with a photograph. the reason you take multiples is mostly for timing and lighting. also, rolling a hundred dice is not the same as painting something 100 times and picking the best one, nor is it like photographing it. the fact that you’re even making this comparison is insane.

Define art.

Any work made to convey a concept and/or emotion can be art. I’d throw in “intent”, having “deeper meaning”, and the context of its creation to distinguish between an accounting spreadsheet and art.

The problem with AI “art” is it’s produced by something that isn’t sentient and is incapable of original thought. AI doesn’t understand intent, context, emotion, or even the most basic concepts behind the prompt or the end result. Its “art” is merely a mashup of ideas stolen from countless works of actual, original art run through an esoteric logic network.

AI can serve as a tool to create art of course, but the further removed from the process a human is the less the end result can truly be considered “art”.

As a thought experiment let’s say an artist takes a photo of a sunset. Then the artist uses AI to generate a sunset and AI happens to generate the exact same photo. The artist then releases one of the two images with the title “this may or may not be made by AI”. Is the released image art or not?

If you say the image isn’t art, what if it’s revealed that it’s the photo the artist took? Does it magically turn into art because it’s not made by AI? If not, does it mean when people “make art” it’s not art?

If you say the image is art, what if it’s revealed it’s made by AI? Does it magically stop being art or does it become less artistic after the fact? Where does value go?

The way I see it is that you’re trying to gatekeep art by arbitrarily claiming AI art isn’t real art. I think since we’re the ones assigning a meaning to art, how it is created doesn’t matter. After all, if you’re the artist taking the photo, isn’t the original art piece just the natural occurrence of the sun setting? Nobody created it, there is no artistic intention there, it simply exists and we consider it art.

there’s something highly suspect about someone not understanding the difference between art made by a human being and some output spit out by a dumb pixel mixer. huge red flag imo.

and yes, the value does go. because we care about origin and intent. that’s the whole point.

if the original Mona Lisa were to be sold for millions of dollars, and then someone reveals that it was not the original Mona Lisa but a replica made last week by some dude… do you think the buyer would just go “eh it looks close enough”? no they would sue the fuck out of the seller and guess what, the painting would not be worth millions anymore. it’s the same painting. the value is changed. ART IS NOT A PRODUCT.

i won’t, but art has intent. AI doesn’t.

Pollock’s paintings are art. a bunch of paint buckets falling on a canvas in an earthquake wouldn’t make art, even if it resembled Pollock’s paintings. there’s no intent behind it. no artist.

How can you tell if an entity has intent or not?

comes with having a brain and knowing what intent means.

Yes, but where do you draw the line for an AI having intent? Surely AGI has intent, but you say current AIs do not.

yes because there is no intelligence. AI is a misnomer. intent needs intelligence.

Disagree. The technology will never yield AGI as all it does is remix a huge field of data without even knowing what that data functionally says.

All it can do now and ever will do is destroy the environment by using oodles of energy, just so some fucker can generate a boring big titty goth pinup with weird hands and weirder feet. Feeding it exponentially more energy will do what? Reduce the number of fingers and the foot weirdness? Great. That is so worth squandering our dwindling resources on.

Idk. I find it a great coding help. IMO AI tech has legitimate good uses.

Image generation also has great uses without falling into porn. It enables people who don’t know how to paint to make some art.

Wow, great, the AI is here to defend itself. Working about as well as you’d think.

What?

I really don’t know what’s going on with the anti-AI people. But it is getting pretty similar to any other denialism, anti-science, anti-progress… Completely irrational and radicalized.

Sorry to hurt your fefes, but I don’t like theft and that is what ALL AI content is. How does it “know” how to program? Code stolen from humans. How does it speak? Words stolen from humans. How does it draw? Art stolen from humans.

Until this shit stops being built on a mountain of stolen data and stolen livelihoods, the argument is over. I don’t care if you like stealing money from artists so that you can pretend you had any creative input into an AI’s art output. You’re stealing the work of normal people and think it’s okay because it was already stolen once before by the billionaires who are now selling it to you.

Intellectual property is a capitalist invention.

Human culture is to be shared.

Oh right, we live under communism, where everyone’s needs are cared for. My bad

Oh wait, we aren’t and you are just a shithead who, once again, wants to tell me that stealing from other workers is good.

Technology is a cultural creation, not a magic box outside of its circumstances. “The problem isn’t the technology, it’s the creators, users, and perpetuators” is tautological.

And, importantly, the purpose of a system is what it does.

Technology is a product of science. The facts science seeks to uncover are fundamental universal truths that aren’t subject to human folly. Only how we use that knowledge is subject to human folly. I don’t think open source or open weights models are a bad usage of that knowledge. Some of the things corporations do are bad or exploitative uses of that knowledge.

You should really try and consider what it means for technology to be a cultural feature. Think, genuinely and critically, about what it means when someone tells you that you shouldn’t judge the ethics and values of their pursuits, because they are simply discovering “universal truths”.

And then, really make sure you ponder what it means when people say the purpose of a system is what it does. Why that might get brought up in discussions about wanton resource spending for venture capitalist hype.

That’s not at all what I am doing, or what scientists and engineers do. We are all trained to think about ethics and seek ethical approval because even if knowledge itself is morally neutral the methods to obtain that knowledge can be truly unhinged.

Scientific facts are not a cultural facet. A device built using scientific knowledge is also a product of the culture that built it. Technology stands between objective science and subjective needs and culture. Technology generally serves some form of purpose.

Here is an example: Heavier than air flight is a possibility because of the laws of physics. A Boeing 737 is a specific product of both those laws of physics and of USA culture. Its purpose is to get people and things to places, and to make Boeing the company money.

LLMs can be used for good and ill. People have argued they use too much energy for what they do. I would say that depends on where you get your energy from. Ultimately though it doesn’t use as much as people driving cars or mining bitcoin or eating meat. You should be going after those first if you want to persecute people for using energy.

It does not appear to me that you have even humored my request. I’m actually not even confident you read my comment given your response doesn’t actually respond to it. I hope you will.

Think, genuinely and critically, about what it means when someone tells you that you shouldn’t judge the ethics and values of their pursuits, because they are simply discovering “universal truths”.

No scientist or engineer has ever said that, as far as I can recall. I was explaining that even for scientific fact, which is morally neutral, how you get there is important, and that scientists and engineers acknowledge this. What you are asking me to do is based on a false premise and a bad understanding of how science works.

And then, really make sure you ponder what it means when people say the purpose of a system is what it does.

It both is and isn’t. Things often have consequences alongside their intended function, like how a machine gets warm when in use. It getting warm isn’t a deliberate feature, it’s a consequence of the laws of thermodynamics. We actually try to minimise this as it wastes energy. Even things like fossil fuels aren’t intended to ruin the planet, it’s a side effect of how they work.

It’s a very common talking point now to claim technology exists independent of the culture surrounding it. It is a lie to justify morally vacant research in which the funder, normally a venture capitalist, is only concerned about the money to be made. But engineers and scientists necessarily go along with it. It’s not “not your problem”, because we are the ones executing cultural wants; we are a part of the broader culture as well.

The purpose of a system is, absolutely, what it does. It doesn’t matter how well intentioned your design and ethics were, once the system is doing things, those things are its purpose. Your waste heat example, yes, it was the design intent to eliminate that, but now that’s what it does, and the engineers damn well understand that its purpose is to generate waste heat in order to do whatever work it’s doing.

This is a systems engineering concept. And it’s inescapable.

Not only the pollution.

It has triggered an economic race to the bottom for any industry that can incorporate it. Employers will be forced to replace more workers with AI to keep prices competitive. And that is a lot of industries, especially if AI continues its growth.

The result is a lot of unemployment, which means an economic slowdown due to a lack of discretionary spending, which is a feedback loop. There are only 3 outcomes I can imagine:

- AI fizzles out. It can’t maintain its advancement enough to impress execs.

- An unimaginable wealth disparity and probably a return to something like feudalism.

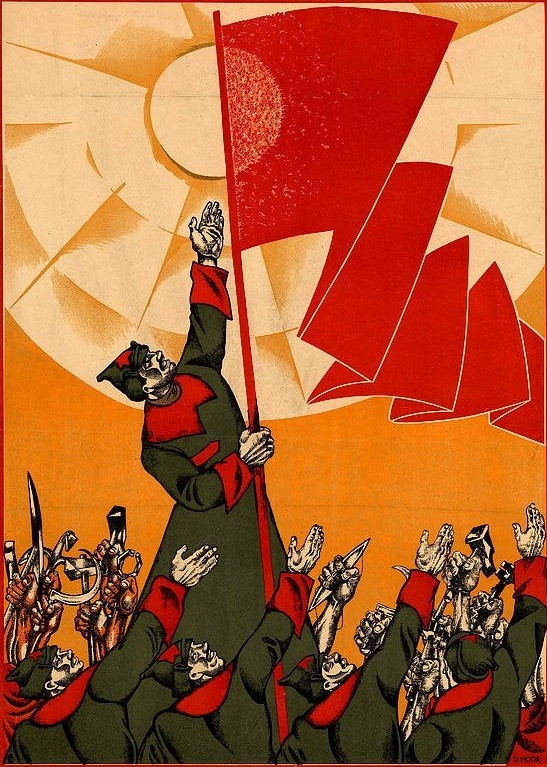

- A social revolution where AI is taken out of the hands of owners and placed into the hands of workers. Would require changes that we’d consider radically socialist now, like UBI and strong af social safety nets.

The second seems more likely than the third, and I consider that more or less a destruction of humanity

It’s been a while since I’ve seen this meme template being used correctly

Turns out, most people think their stupid views are actually genius

It’s wild how we went from…

Critics: “Crypto is an energy hog and its main use case is a convoluted pyramid scheme”

Boosters: “Bro trust me bro, there are legit use cases and energy consumption has already been reduced in several prototype implementations”

…to…

Critics: “AI is an energy hog and its main use case is a convoluted labor exploitation scheme”

Boosters: “Bro trust me bro, there are legit use cases and energy consumption has already been reduced in several prototype implementations”

They’re not really comparable. Crypto and blockchain were good solutions looking for problems to solve. They’re innovative and cool? Sure, but they never had a widescale use. AI has been around for a while, it just recently got rebranded as artificial intelligence; the same technologies were called algorithms a few years ago… And they basically run the internet and the global economy. Hospitals, schools, corporations, governments, militaries, etc. all use them. Maybe certain uses of AI are dumb, but trying to pretend that the thing as a whole doesn’t have genuine uses, when it already does, is just dumb.

I feel like you’re being incredibly generous with the usage of AI here. I feel as though the post and comment above refer to LLM/image generation AI. Those “types of ‘AI’” certainly don’t run all those things.

The term AI is very vague because intelligence is an inherently subjective concept. If we’re defining AI as something that has consciousness then it doesn’t exist, but if we’re defining it as a task that a computer can do on its own, then virtually everything that is automated is run by AI.

Even with generative AI models, they’ve been around for a while too. For example, a lot of the news articles you read, especially about the weather, aren’t written by actual people, they’re AI generated. Another example would be scientific simulations, they use AI to generate a bunch of possible scenarios based on given parameters. Yet another example would be the gaming industry, what do you think generates Minecraft worlds? The point here is that AI has been around for a while and is already being used everywhere. What we’re seeing with ChatGPT and these other new models is that these models are now being released for public access. It’s like democratization of AI, and a lot of good and bad things are bound to come of it. We’re at the infancy stage of this now, but just like with the world wide web before it, these technologies are going to fundamentally change how we do many things from now on.

We can’t fight technology, that’s a losing battle. These AIs are here and they’re here to stay. So strap in and enjoy the ride.

I think you misunderstood me, I’m not trying to make some point about “LLMs aren’t ‘real AI’” or even what is and is not AI. I’m just saying the post is talking about that type of AI specifically and I wouldn’t say those types are controlling that much of the world.

Stupid AI will destroy humanity. But the important thing to remember is that for a brief, shining moment, profit will be made.

Line go up 🤓

The root problem is capitalism though, if it wasn’t AI it would be some other idiotic scheme like cryptocurrency that would be wasting energy instead. The problem is with the system as opposed to technology.

Right, but the technology has the system’s philosophy baked into it. All inventions encourage a certain way of seeing the world. It’s not a coincidence that agriculture yields land ownership, mass production yields wage labor, or in this case fuzzy plagiarism machines yield a transhuman death cult.

Sure, technology is a product of the culture and it in turn influences how the culture develops, there’s a dialectical relationship there.

So why take the heat off of AI, as if profiting from mass plagiarism is different when it has an API instead of flesh and bone?

Because as I explained in my original comment, if it’s not AI it’s going to be some other bullshit.

The root problem is human ideology. I do not know if we can have humans without ideology.

This sounds like some Žižekian nonsense. Capitalism’s Court Jester: Slavoj Žižek

I’m open to trying a non-Capitalist system, but I’m pretty sure hierarchical bullshit will happen and the majority will end up being exploited.

Whether anyone else is open to it before humans extinguish themselves, I don’t know.

Nah, human ideology is much broader than a single economic system. The fact that people who live under capitalism can’t understand this just shows the power of indoctrination.

I’m not a fan of ideology.

What you’re saying is that you’re not self-aware enough to realize that you have an ideology. Everyone has a world view that they develop to understand how the world works, and every world view necessarily represents a simplification of reality. Forming abstractions is how our minds deal with complexity.

I’m autistic.

Do you think people should be treated with respect? Do you think there should be consideration for your condition so you are not excluded from certain events, activities, opportunities?

These are matters of ideology. If you say yes to it, it is ideological in the same way when you say no to it. There is no inherent objective truth to these value questions.

Same for the economy. It doesn’t matter if you think that growth should be the main objective, or that equal opportunity should be the focus, or sustainability, or other things. You will have to make a value judgement, and the sum of these values represents your ideology.

There is no inherent objective truth to these value questions.

I disagree. These values are based on objective observations.

This conveniently ignores the progress being made with smaller and smaller models in the open source community.

As with literally every technical advance, the tech itself is not the problem, capitalism’s use of it is.

The problem is the concentration of power, Sam “regulate me daddy” Altman’s plan is to get the government to create a web of regulation that makes it so only the big tech giants have access to the uncensored models.